Contents

- Intro

- Overview

- Log Analytics Setup

- Agent Deployment

- Scripted Agent Deployment

- Solutions

- Log Queries

- Alerts

- Monitor Metrics

- Summary

As organizations transition from server-based infrastructure to containers, serverless computing, and PaaS/SaaS offerings in the cloud, our monitoring and operations tools must evolve as well. Every organization has different needs and unique requirements to support its applications and business operations, so it’s important to use a tool that can adequately satisfy modern cloud and datacenter monitoring requirements.

It’s no secret that a plethora of monitoring tools already exist. Traditional Microsoft datacenters might be running System Center Operations Manager, while others may rely on tools like SolarWinds to monitor up/down and network connectivity across their environment. Several other IaaS and SaaS solutions exist to do the same. This post is not meant to compare and contrast these solutions, but rather to get you started with Azure Log Analytics and Azure Monitor as an addition to, or replacement for, your existing monitoring solutions. This may be particularly helpful for those wanting to transition from SCOM.

Overview

Azure Log Analytics is a SaaS solution offered by Microsoft that ingests logs and metrics from your infrastructure. This ingestion of data serves as the framework for several other services like Azure Monitor and Azure Sentinel. The imported data from logs and metrics is available immediately to be analyzed or processed by alert rules (more on that later). Some of the key benefits of Log Analytics include:

- A serverless, centralized collection of logs and metrics

- Full support for VMs running anywhere (on-prem, Azure, other clouds)

- Broad OS support (full list here)

- Very simple deployment for VMs running in Azure

- Powerful notification/automation capabilities using Azure Alerts

- Simple connectivity requirements

- Very attractive pricing options

Like most monitoring solutions, Log Analytics uses a small agent to gather this data and direct it to one or more Log Analytics workspaces. If you’re a SCOM admin, this is the same Microsoft Monitoring Agent used to forward this data to your SCOM management server. By plugging your agent into a Log Analytics workspace, you bypass any major network requirements by forwarding collected data directly to Azure through an encrypted TLS (HTTPS) connection. You also have the option to use a proxy/gateway connection if needed.

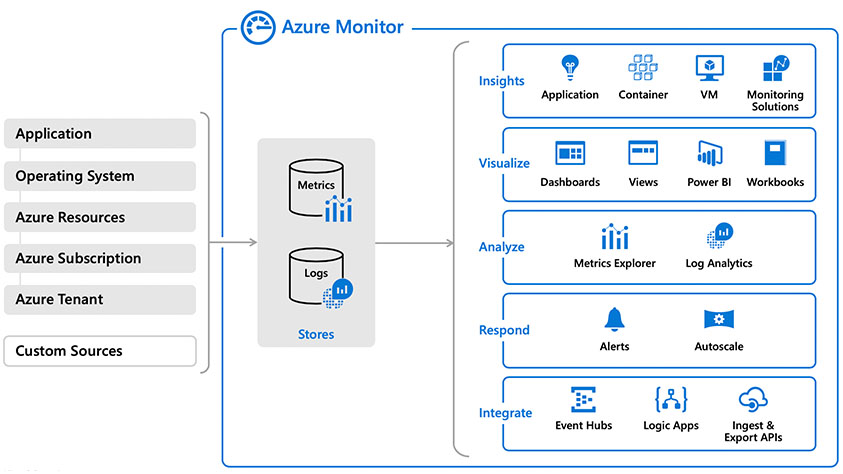

Looking at the components of Azure Monitor, you begin to see the big picture: gathering data, visualizing and analyzing it, responding to conditions, and integrating it with Azure services. All of it is built on Log Analytics and Metrics. Be sure to check out Microsoft’s overview of Azure Monitor.

Log Analytics Setup

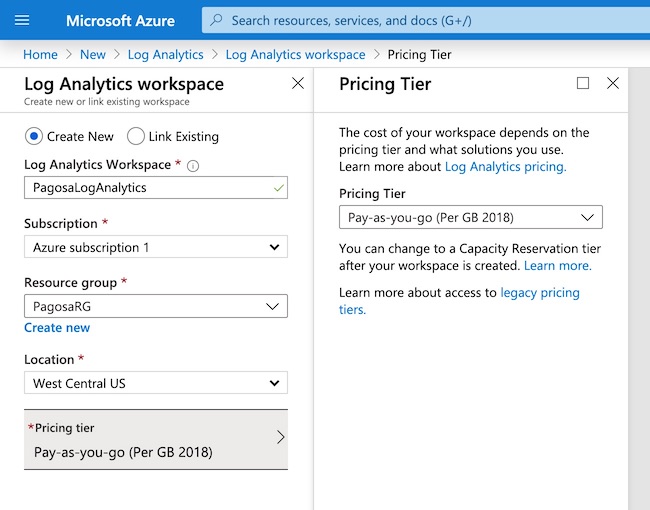

To start, you will need a Log Analytics workspace configured in Azure. You can create this easily through the Azure portal by choosing Log Analytics Workspace from the Marketplace. Choose the Location and Resource Group that works best for your configuration. There are several pricing options available if you have an EA or OMS credits, but we will use pay-as-you-go pricing.

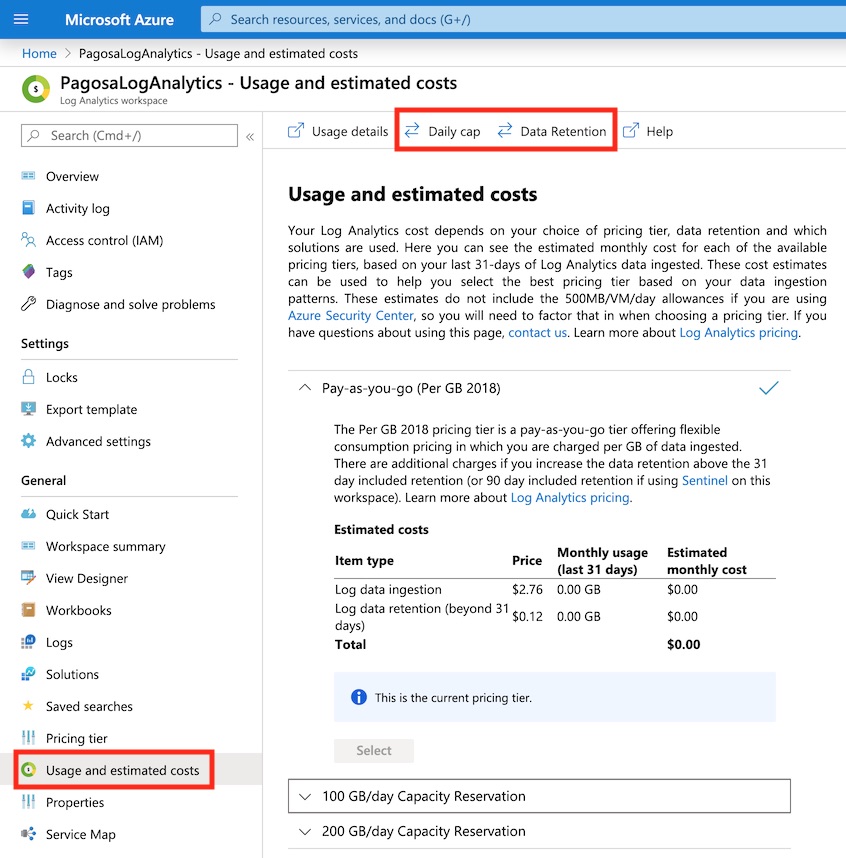

Now that the Log Analytics workspace is deployed, let’s look at the configuration settings. You will want to check out Usage and Estimated Costs if you need to limit how much data is collected or how long it is retained. You can cap your data ingestion at a determined level to help rein in the costs. All ingested data is kept for a month at minimum, but you can increase the retention time if desired. Historical VM performance metrics are a good example of why you may want to increase the retention range, but doing so will increase the cost for your workspace. More info on pricing is available from Microsoft here.

You will get a much more accurate picture of how much data is ingested, and what it costs, once logs and metrics have been flowing in for a few days, or ideally a full month. That gives you a better sense of your burn rate and how to tune your data collection settings.
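To make the burn-rate idea concrete, here is a rough Python sketch that projects a month of ingestion from a few days of observed volume. The daily figures and the price per GB are made-up placeholders, not Azure prices; substitute the numbers from your own Usage and Estimated Costs view and the current pricing page. It also ignores any free allowance or retention charges, so treat it as a back-of-the-envelope estimate only.

```python
from statistics import mean


def project_monthly_ingestion(daily_gb, days_in_month=30):
    """Project monthly ingestion (GB) from a list of observed daily volumes."""
    if not daily_gb:
        raise ValueError("need at least one daily sample")
    return mean(daily_gb) * days_in_month


def estimate_monthly_cost(daily_gb, price_per_gb, days_in_month=30):
    """Multiply the projected monthly volume by a flat per-GB price."""
    return project_monthly_ingestion(daily_gb, days_in_month) * price_per_gb


# Hypothetical samples: GB ingested on each of the first three days.
samples = [1.0, 2.0, 3.0]
# Placeholder price, NOT a real Azure rate.
placeholder_price_per_gb = 2.0

print("Projected GB per month:", project_monthly_ingestion(samples))
print("Projected cost per month:", estimate_monthly_cost(samples, placeholder_price_per_gb))
```

The more days you feed in, the less a single noisy day skews the projection, which is why waiting closer to a month gives a better estimate.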

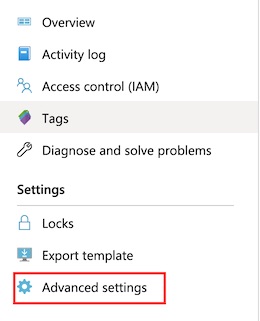

Open the Advanced Settings to retrieve information about your workspace.

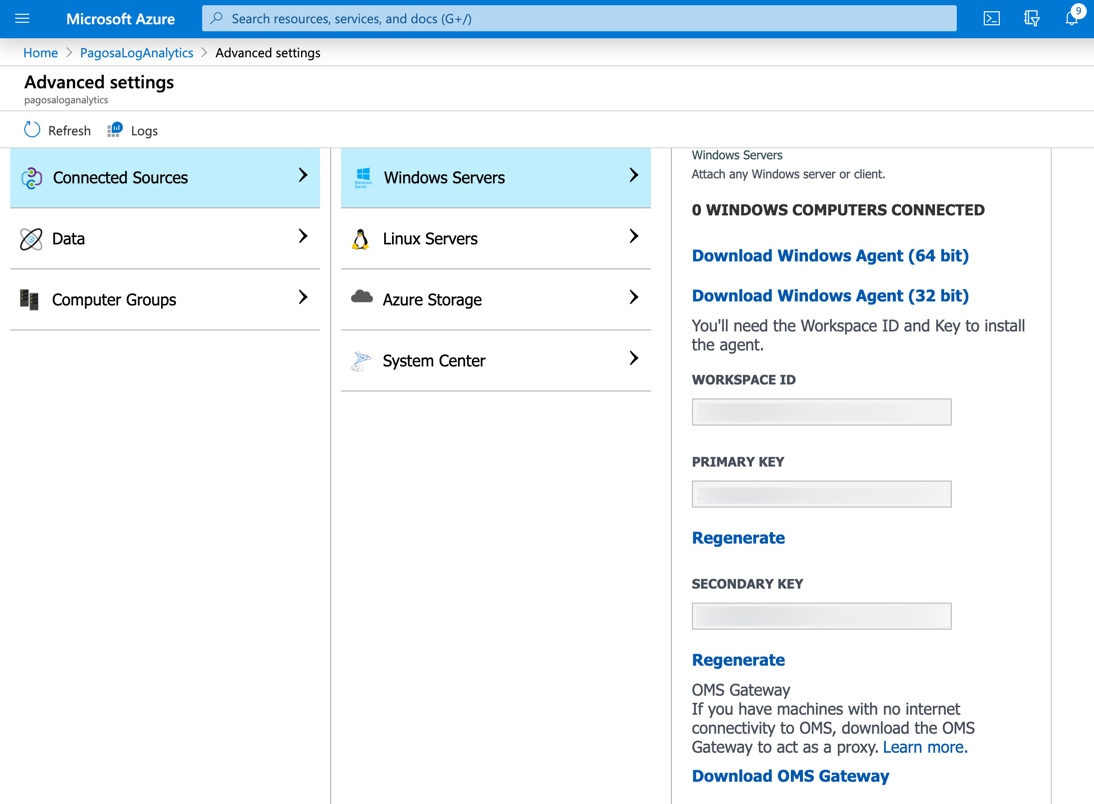

In Advanced Settings, you will find important information such as the workspace ID and the Key required to associate an agent to a workspace. We will use this ID and Key in a later step for a manual agent installation.

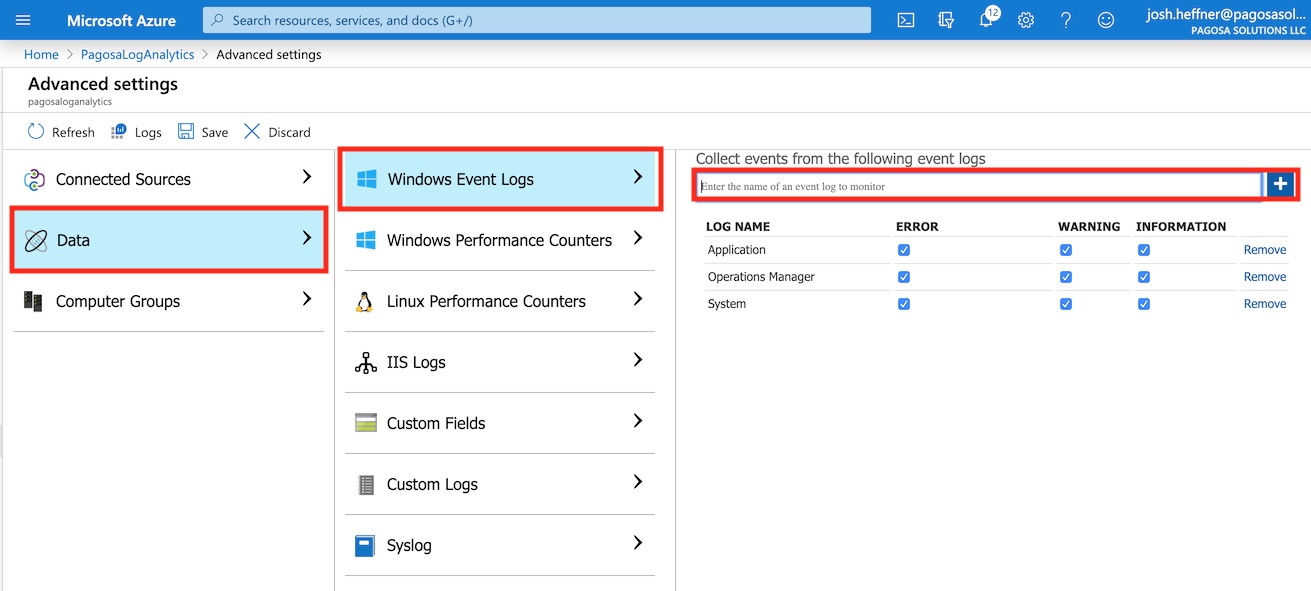

We also need to configure what types of logs are ingested into the workspace. These are not added by default, but they do determine what kinds of log data you will be able to query and create alerts for. In this example, we will collect all event logs related to Application, System, and Operations Manager. If you’re not sure what to start with, this is a pretty good data set to use initially. Note that the more logs you collect here, the more data you will ingest, resulting in an increase in cost. A list of available event record properties can be found from Microsoft here.

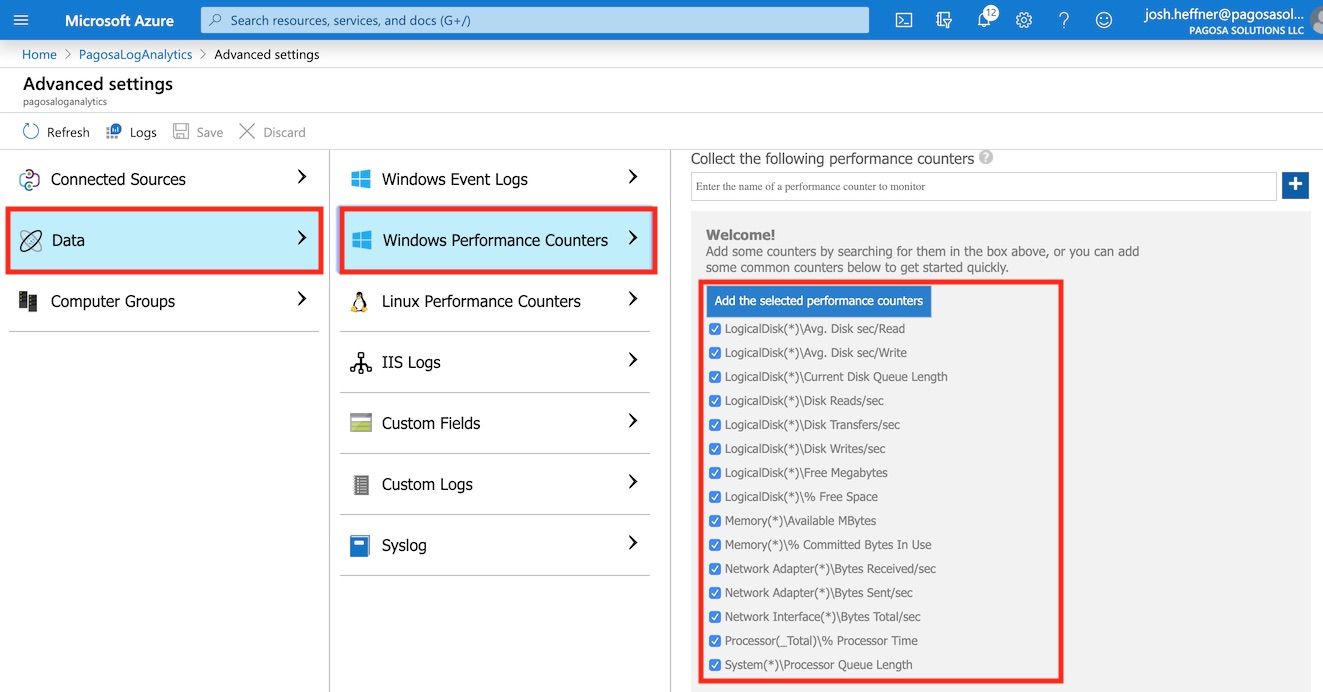

You should also add data for Windows Performance Counters if you want to monitor metrics like system heartbeat, resource utilization, disk space, etc. The default recommended counters work as a great starting point, but additional counters like memory utilization are available. Click Save, and Log Analytics will start ingesting data from these logs and counters. A list of available performance counters can be found from Microsoft here.

Agent Deployment

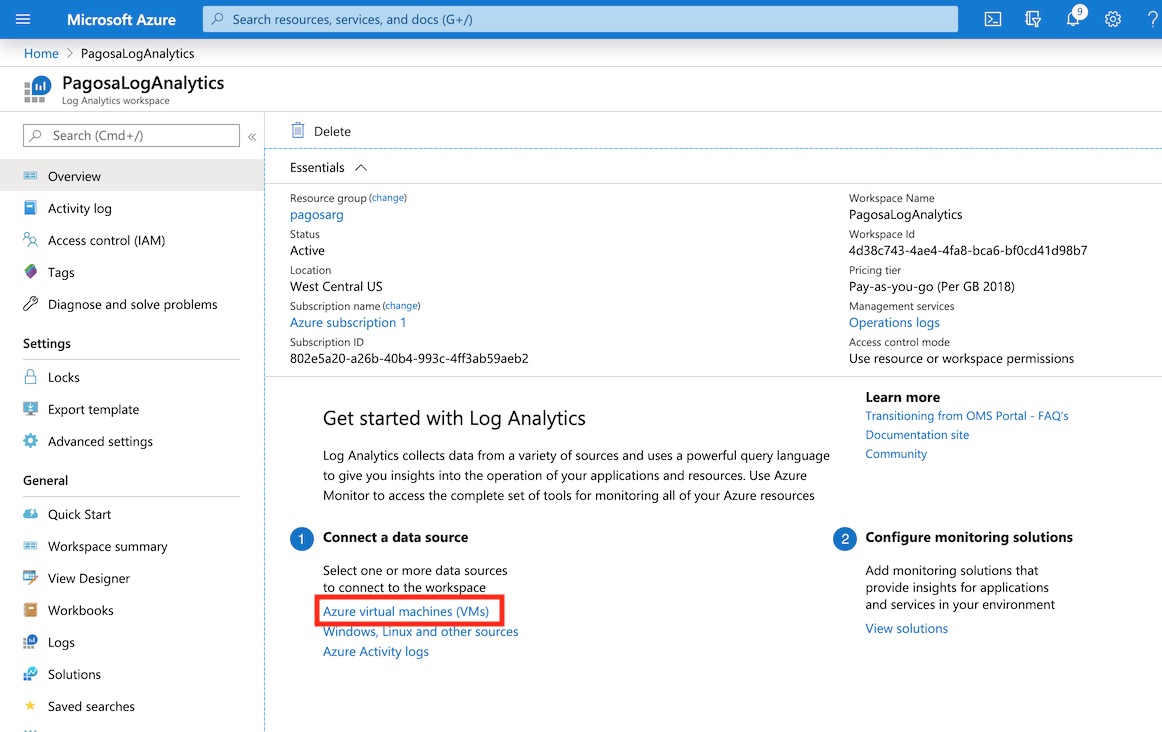

There are a few different ways to deploy the agent required for Log Analytics. Let’s start with the easiest. To deploy the agent to a VM running inside your Azure subscription, choose Azure VM from the “Connect to a Data Source” step.

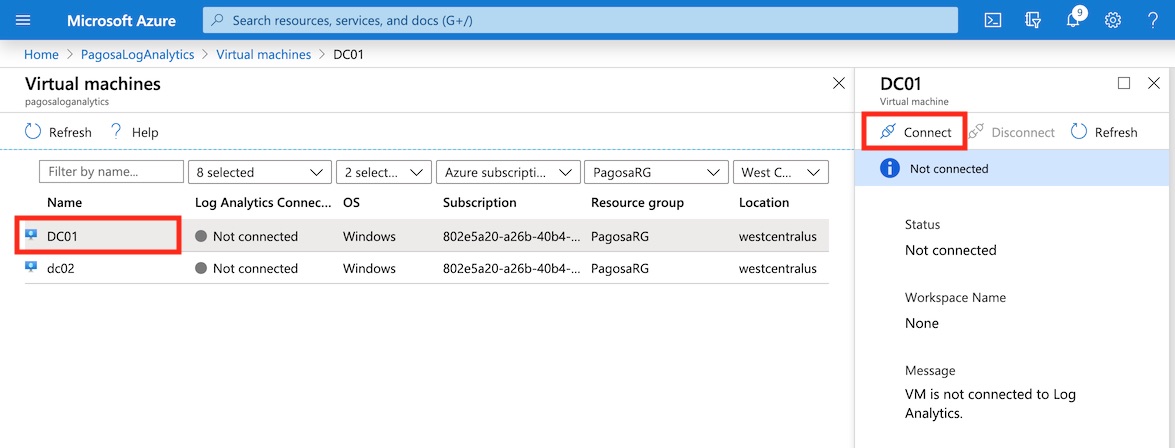

A list of VMs in your Azure subscription will be available to select and connect to your workspace. We’ll add DC01 in this example. You could also do this automatically through a policy.

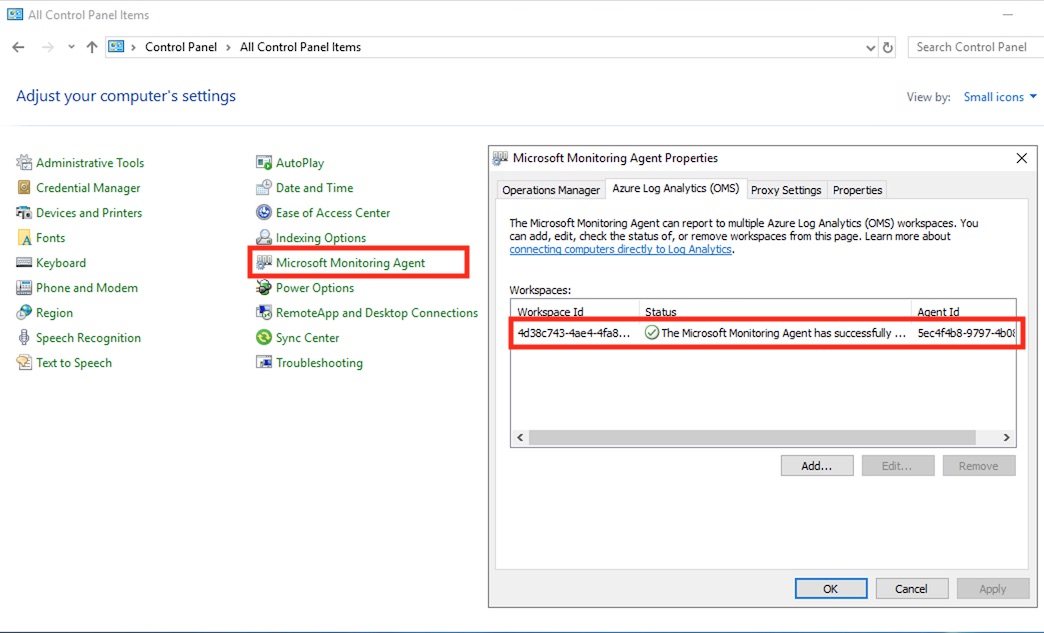

Deploying to Azure VMs directly from the workspace automates a few steps that we will go through manually next. It adds the Microsoft Monitoring Agent extension to the Azure VM in Resource Manager. It also automates the install of the agent inside the OS, and then it connects the agent to the Workspace as seen below.

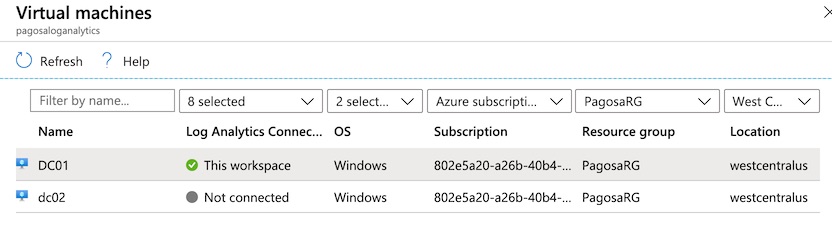

It usually takes about 5 minutes to complete the process. The agent will show as connected to “this workspace” when complete.

Note: only VMs with the Microsoft Monitoring Agent extension added will show as connected in this list. Manually installed agents might not show as connected, but they will work.

Manual agent installations can be done for any VM running outside of your Azure subscription. They can be running on-premises or even in another public cloud. There are no domain or complex connectivity requirements. The agent download for Windows/Linux can be found in the Advanced Settings blade or below:

- Windows 64-bit agent – https://go.microsoft.com/fwlink/?LinkId=828603

- Windows 32-bit agent – https://go.microsoft.com/fwlink/?LinkId=828604

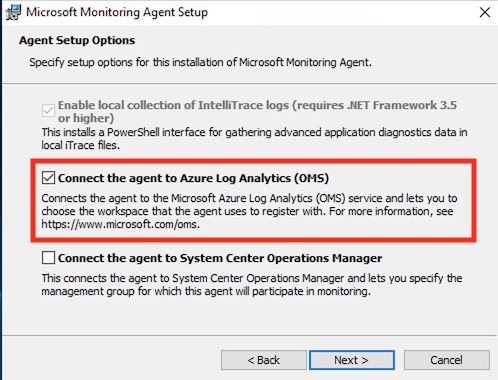

Start the agent installation and check the box to connect it to Azure Log Analytics.

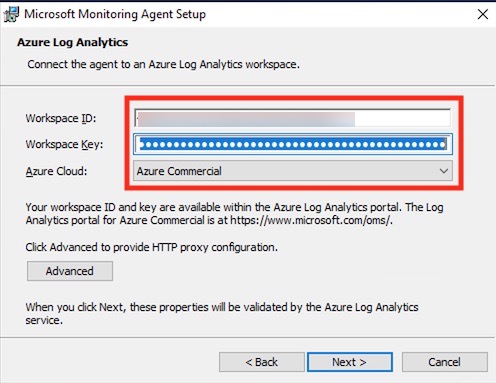

Enter the workspace ID and key from the Advanced Settings blade from the previous step to complete the installation.

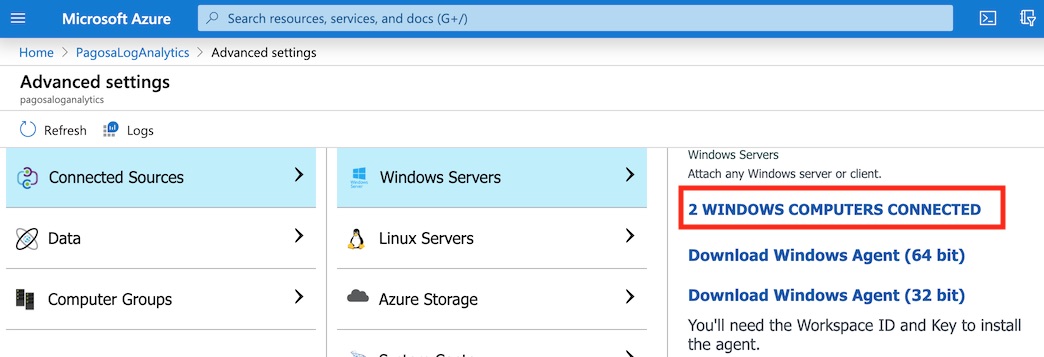

In a moment, you will be able to see the newly connected agents under Connected Sources in Advanced Settings.

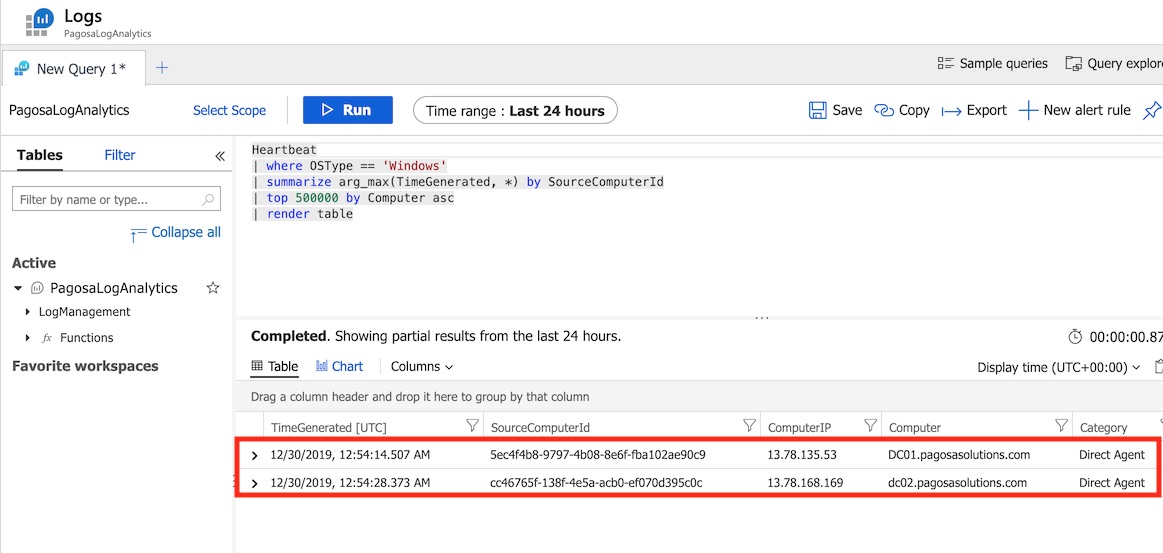

If you click the “Computers Connected” link, a Log Analytics Query will run and display a list of connected agents. This also happens to be your first query which is running against ingested log data. This blade is opened from the “Logs” menu item in your Log Analytics workspace. Queries are used to quickly retrieve relevant information – we will explore this more in-depth in a moment.

Scripted Agent Deployment

A silent, scripted install is helpful in environments with several systems to connect. First, extract the agent installer downloaded in the previous step using the following command:

MMASetup-AMD64.exe /c

The following command will install the agent silently – just add your workspace ID and Key.

setup.exe /qn /norestart NOAPM=1 ADD_OPINSIGHTS_WORKSPACE=1 OPINSIGHTS_WORKSPACE_AZURE_CLOUD_TYPE=0 OPINSIGHTS_WORKSPACE_ID="<your workspace ID>" OPINSIGHTS_WORKSPACE_KEY="<your workspace key>" AcceptEndUserLicenseAgreement=1
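If you drive the install from a deployment script, it can help to assemble that command as an argument list rather than one long string, so the workspace ID and key never need manual quoting. The sketch below is one way to do that in Python; the function name is hypothetical, and it only uses the installer properties shown in the command above.

```python
def build_mma_install_args(workspace_id, workspace_key, cloud_type=0):
    """Return the silent-install command as a list of arguments.

    Only the properties from the command above are used; nothing here
    talks to Azure or validates the ID or key.
    """
    if not workspace_id or not workspace_key:
        raise ValueError("workspace ID and key are both required")
    return [
        "setup.exe",
        "/qn",
        "/norestart",
        "NOAPM=1",
        "ADD_OPINSIGHTS_WORKSPACE=1",
        f"OPINSIGHTS_WORKSPACE_AZURE_CLOUD_TYPE={cloud_type}",
        f"OPINSIGHTS_WORKSPACE_ID={workspace_id}",
        f"OPINSIGHTS_WORKSPACE_KEY={workspace_key}",
        "AcceptEndUserLicenseAgreement=1",
    ]


# Placeholder values for illustration only.
args = build_mma_install_args("<your workspace ID>", "<your workspace key>")
print(" ".join(args))
```

On a Windows host, from the folder where the installer was extracted, a list like this could be handed to `subprocess.run(args, check=True)`. Keep the workspace key out of source control and logs.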

Additional scripted install options can be found from Microsoft here.

In SCOM environments, you may already have the agent deployed and just need to connect the existing agent to a Log Analytics Workspace. This PowerShell script will connect an existing agent install to a workspace – just update it with your workspace ID and Key. It will also install the agent if missing. Credit to John Savill for the original script.

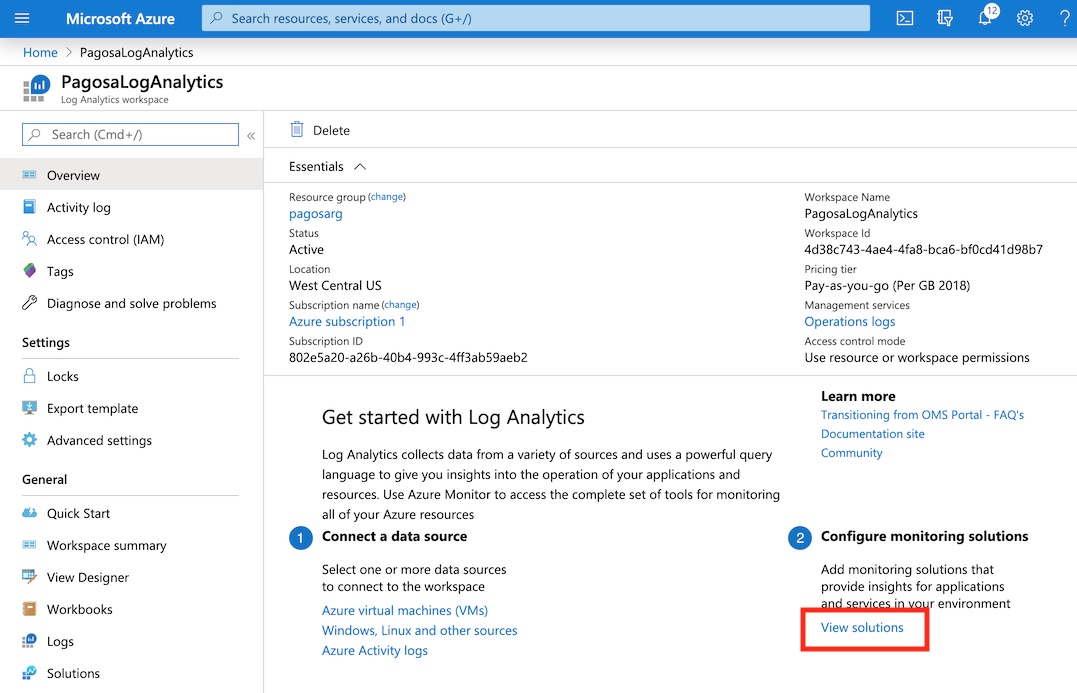

Solutions

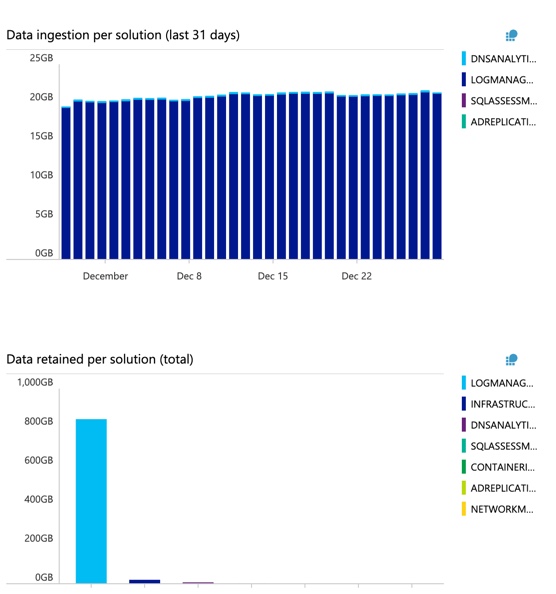

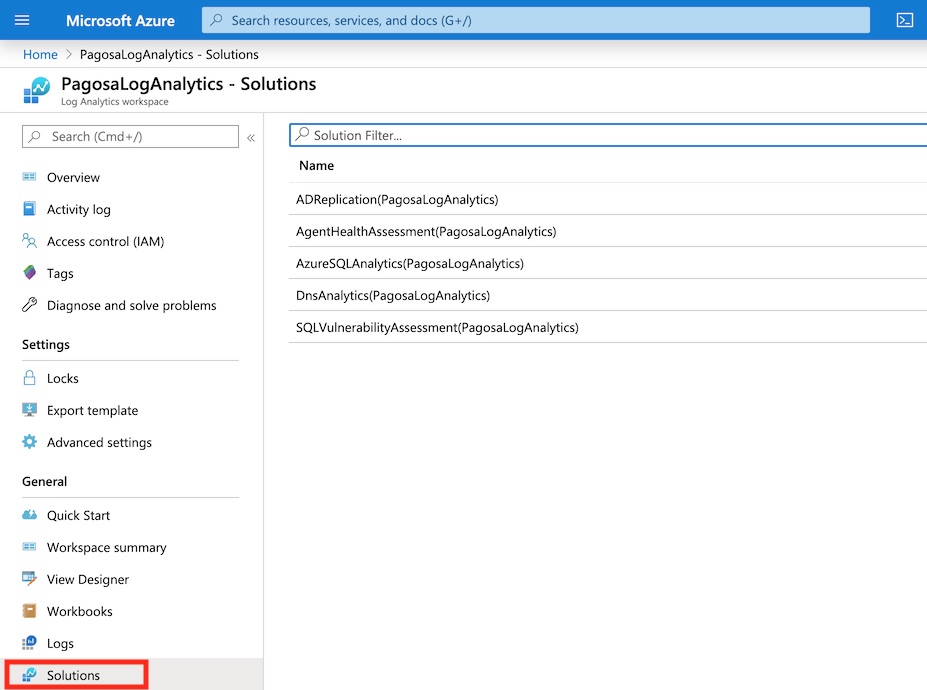

Solutions are pre-developed dashboards and queries for Log Analytics that are designed to provide valuable information for specific areas such as Active Directory, SQL, or DNS. You don’t have to use them, but they can help you get started quickly in those areas. Open “View Solutions” to browse the Azure Marketplace for available solutions for Log Analytics. It’s as simple as attaching a solution to your existing workspace.

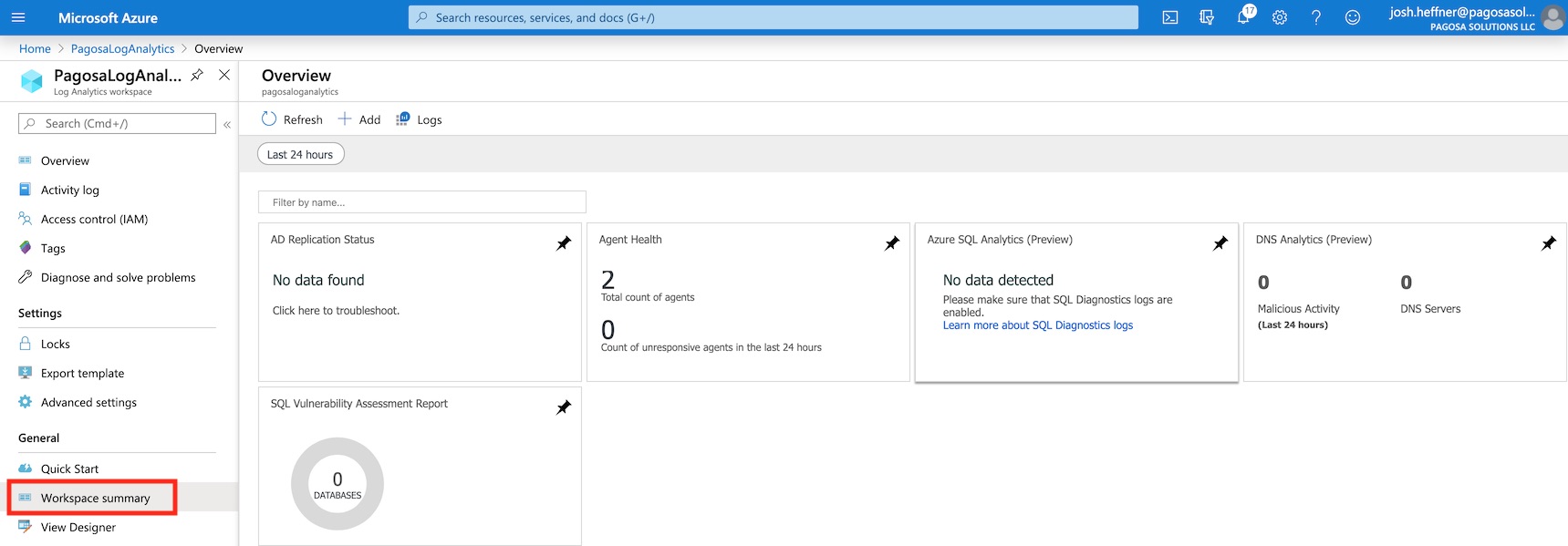

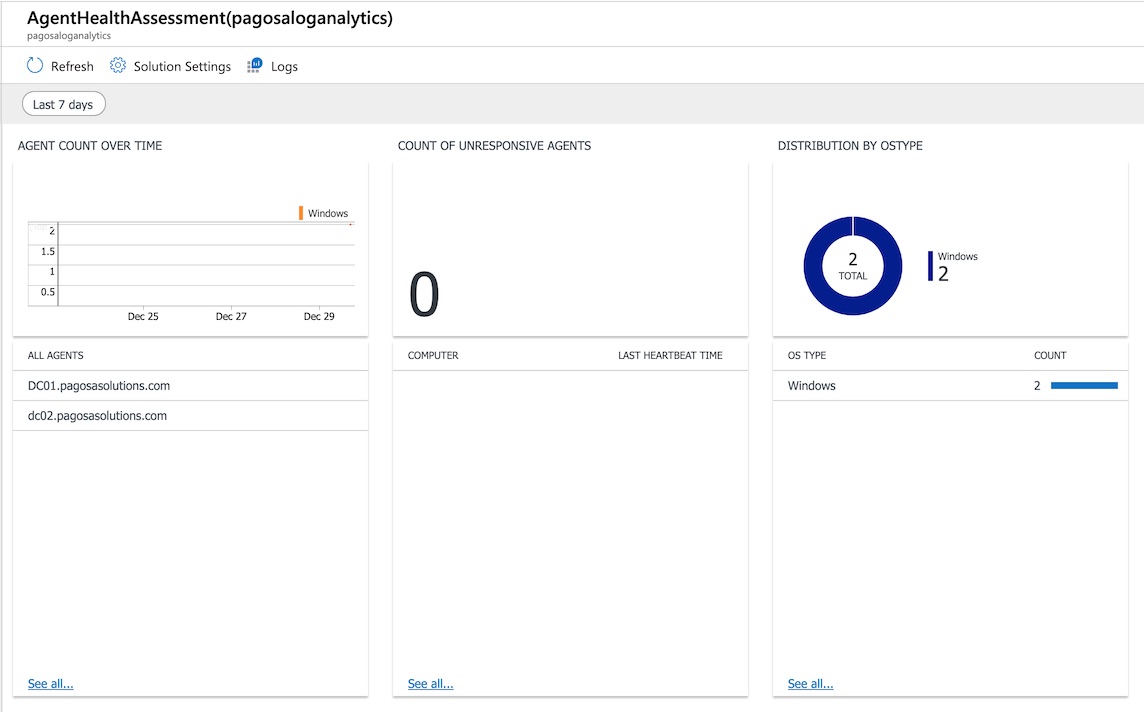

A few of the solutions I regularly use can be seen below.

Dashboards from Solutions can be found under the workspace summary.

And each solution tile will bring up additional data that may be useful.

Log Queries

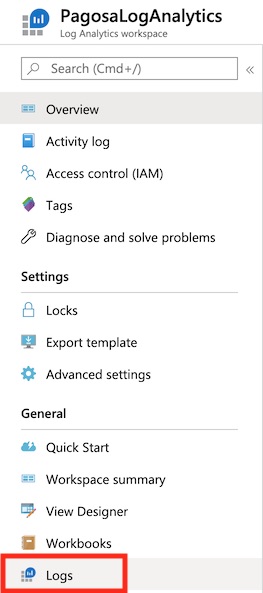

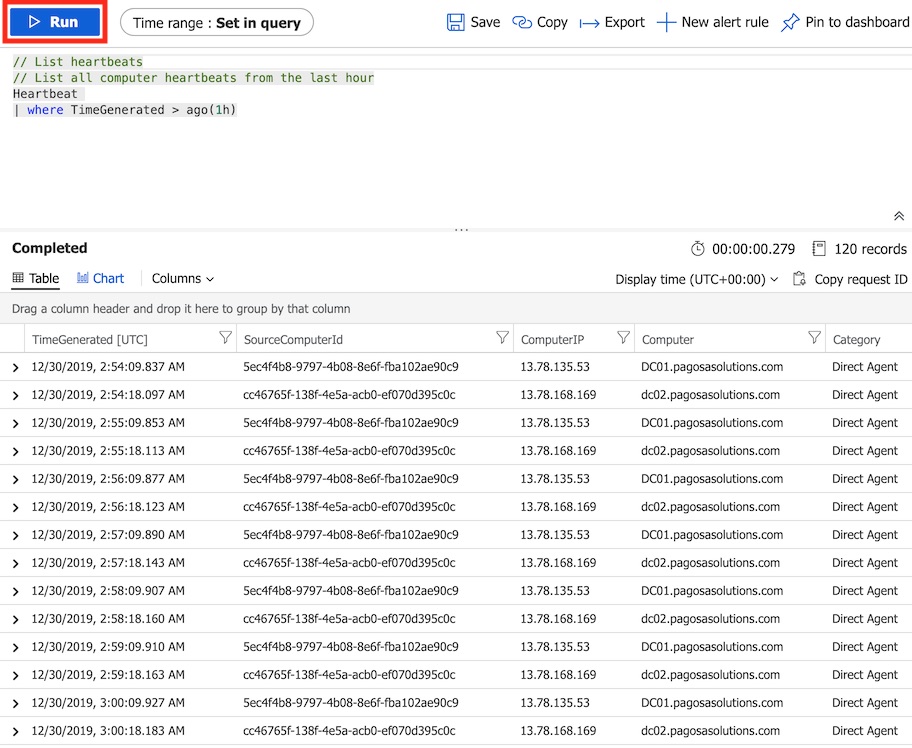

Queries are used to sort through and analyze relevant information from all of the log and performance data you’ve been gathering up to this point. You can find Logs in the main menu for Log Analytics.

If you are new to Kusto Query Language (KQL), or to query languages in general, it will take a little practice to pull the right information out of the log data being ingested. There is much more to KQL than this post covers, but the basics here will be enough to get you started. It’s usually easiest to start with pre-built queries and modify them to meet your specific monitoring requirements.

Microsoft has two very helpful tutorials on how to get started with Log Analytics and Log Queries. Both are excellent and have lots of sample queries:

- https://docs.microsoft.com/en-us/azure/azure-monitor/log-query/get-started-portal

- https://docs.microsoft.com/en-us/azure/azure-monitor/log-query/get-started-queries

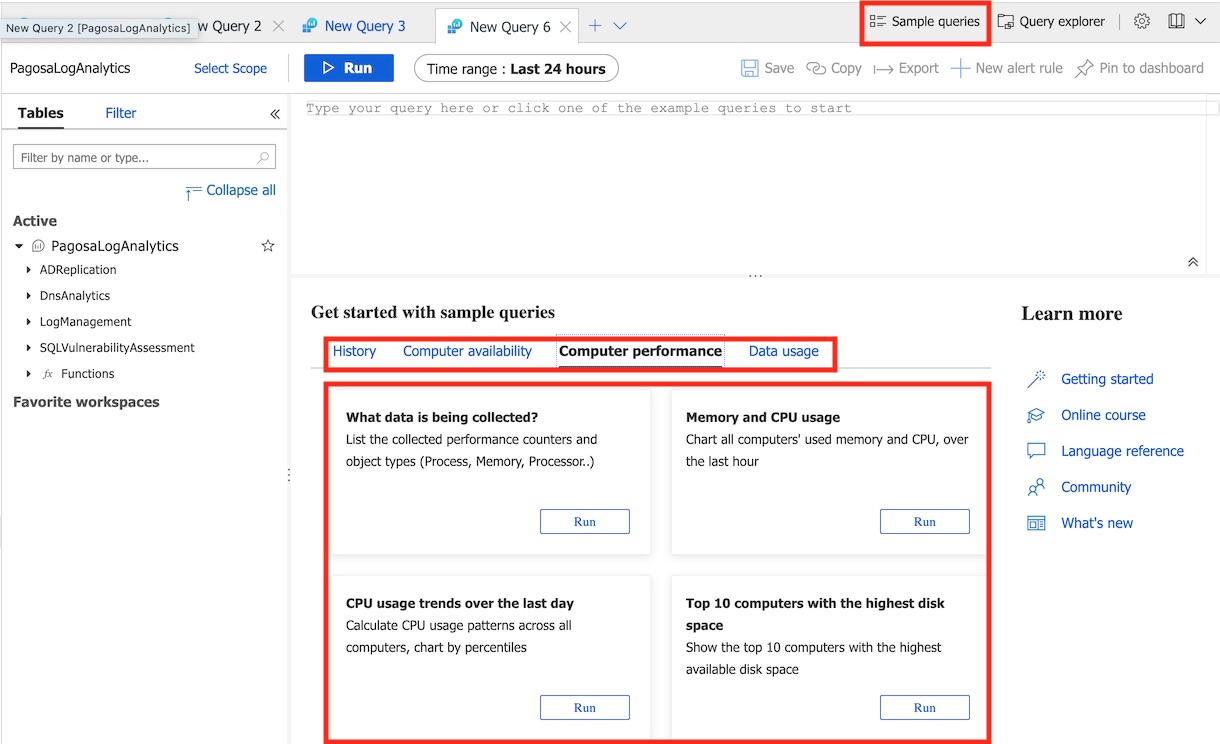

When you open a new log query, there are a few tools to help you start. The “Sample Queries” button in the top menu brings up several ready-to-go queries to quickly retrieve things like CPU usage and memory utilization. Click the Run button for any query to paste it into the query input box, where it can be further modified. You can also check the language reference for help with syntax.

Run the query to immediately process all collected logs. In this case, we’re querying CPU usage over the past hour.

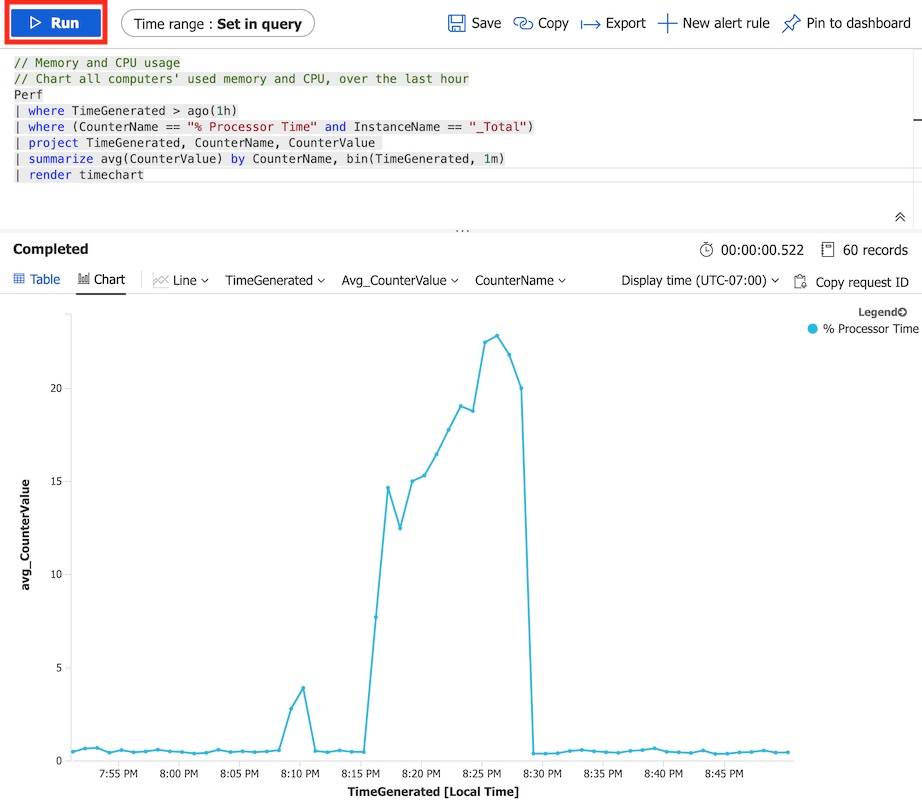

In the next query, we retrieve a simple table of all agent heartbeat check-ins in the past hour.

Here are a few more queries that I have saved that you may find useful.

Query to return all logical disks that have less than 20% free space:

Perf | where ObjectName == "LogicalDisk" and CounterName == "% Free Space" | summarize FreeSpace = min(CounterValue) by Computer, InstanceName | where strlen(InstanceName) == 2 and InstanceName contains ":" | where FreeSpace < 20 | sort by FreeSpace asc
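If the pipeline in that query is hard to follow, the same steps can be walked through in plain Python on a handful of made-up samples. This is only an illustration of the logic (take the lowest reading per disk, keep drive-letter instances such as `C:`, keep those under 20%, sort ascending); the computer names and values are invented, and nothing here talks to Log Analytics.

```python
def low_disk_space(samples, threshold=20.0):
    """Mirror the free-space query on (computer, instance, free_pct) tuples."""
    # summarize FreeSpace = min(CounterValue) by Computer, InstanceName
    lowest = {}
    for computer, instance, free_pct in samples:
        key = (computer, instance)
        lowest[key] = min(free_pct, lowest.get(key, free_pct))

    # Keep two-character instances containing ":" (drive letters), which
    # drops entries such as "_Total", then keep only disks under the threshold.
    rows = [
        (computer, instance, free)
        for (computer, instance), free in lowest.items()
        if len(instance) == 2 and ":" in instance and free < threshold
    ]

    # sort by FreeSpace asc
    return sorted(rows, key=lambda row: row[2])


# Invented sample readings for illustration.
samples = [
    ("DC01", "C:", 35.0),
    ("DC01", "C:", 12.5),
    ("DC01", "_Total", 10.0),
    ("SQL01", "D:", 5.0),
    ("SQL01", "E:", 80.0),
]

for row in low_disk_space(samples):
    print(row)
```

Because the query takes the minimum reading over whatever time range is selected, a disk that dipped below 20% earlier in the range is still reported even if it has since recovered.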

Query to return CPU usage for all servers in the past 4 hours to a graph:

Perf | where TimeGenerated > ago(4h) | where CounterName == @"% Processor Time" | summarize avg(CounterValue) by Computer, bin(TimeGenerated, 15m) | render timechart
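The `bin(TimeGenerated, 15m)` step is what turns raw samples into evenly spaced points on the chart. As a rough illustration of the idea (not of how the service implements it), the Python sketch below rounds each timestamp down to the start of its 15-minute bucket and averages the values per computer and bucket. The sample data is invented.

```python
from collections import defaultdict
from datetime import datetime, timedelta

EPOCH = datetime(1970, 1, 1)


def bin_time(ts, width):
    """Round a timestamp down to the start of its bucket."""
    return EPOCH + ((ts - EPOCH) // width) * width


def average_by_computer_and_bin(samples, width=timedelta(minutes=15)):
    """Average values per (computer, time bucket) from (computer, ts, value) tuples."""
    totals = defaultdict(lambda: [0.0, 0])
    for computer, ts, value in samples:
        key = (computer, bin_time(ts, width))
        totals[key][0] += value
        totals[key][1] += 1
    return {key: total / count for key, (total, count) in totals.items()}


# Invented CPU samples for one server.
samples = [
    ("DC01", datetime(2020, 1, 1, 10, 2), 10.0),
    ("DC01", datetime(2020, 1, 1, 10, 14), 30.0),
    ("DC01", datetime(2020, 1, 1, 10, 16), 50.0),
]

for (computer, bucket), avg in sorted(average_by_computer_and_bin(samples).items()):
    print(computer, bucket, avg)
```

A wider bin smooths the line and hides short spikes; a narrower one shows more detail but produces a noisier chart.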

Query to return all application errors in Event Viewer:

Event | where EventLog == "Application" | where EventLevelName == "Error" | project Computer, TimeGenerated, RenderedDescription

Alerts

Up until now, we’ve only been analyzing data that has been gathered from systems. Now we need to start working on the response action in the Azure Monitor process. Alerting allows us to look for certain conditions to trigger actions like an email/text notification, an Azure Function, a push notification, etc. This is where you start getting into automation and where the real power of Log Analytics lies.

Again, this guide will only get you started with alerting, but it should help you set alerts that most monitoring tools would perform.

The mechanics of alerting for Log Analytics are simple. It’s all query-based, just like the queries used in the previous step. A query simply serves as the condition for an alert to fire. Of course, you should modify your alert queries to return only the most critical information that you want to be notified about. In most monitoring environments, this would be things like system heartbeat monitoring, free disk space, high CPU/memory usage, or errors in specific event logs. Also consider that too much alert noise will quickly make all of your alerts irrelevant.

Before creating any alerts, we are going to import a starter pack of core alerts that Microsoft has created and used internally to help migrate their monitoring solution from SCOM to Azure Monitor. These are an excellent starting point for any implementation. You can find the Microsoft Alert Toolkit on GitHub here. Instructions to install the toolkit are documented on the GitHub repository, but we will run through them now.

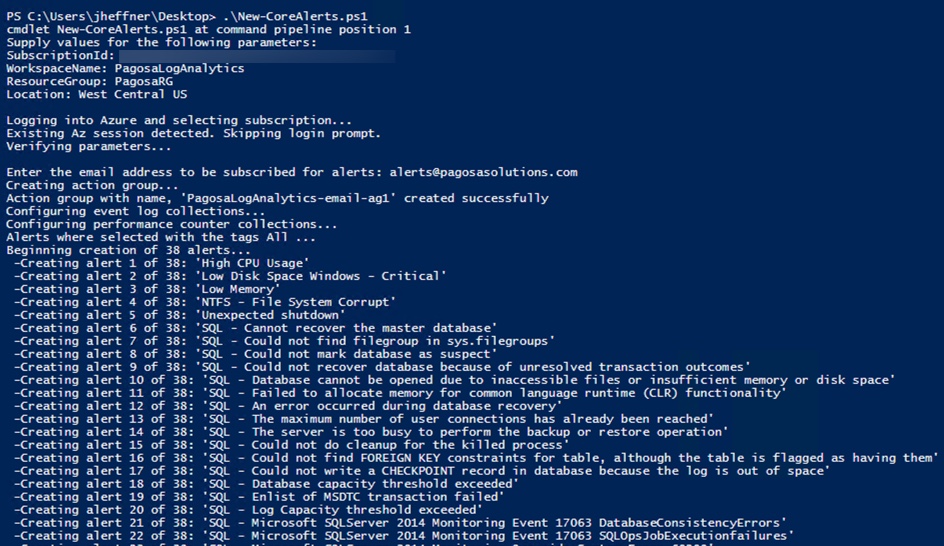

To import the Alert Toolkit directly into your workspace, download both DefaultAlertConfig.json and New-CoreAlerts.ps1 to a local system with PowerShell.

Install the Az PowerShell module required to connect to Azure.

Install-Module Az

Accept the NuGet provider and any install prompts. This will take a few minutes if you don’t already have the module installed.

Log into your Azure subscription:

Login-AzAccount

Next, run the Alert Toolkit script from GitHub:

.\New-CoreAlerts.ps1

You will be prompted for information from your environment.

- SubscriptionID: found under Subscriptions in the Azure Portal

- WorkspaceName: the name of the Log Analytics workspace you created

- ResourceGroup: the Resource Group name where your workspace is located

- Location: location of your workspace (“West Central US” in this example)

You will also be prompted for an email address to use initially for the alert action group. All of the rules should import in a couple of minutes.

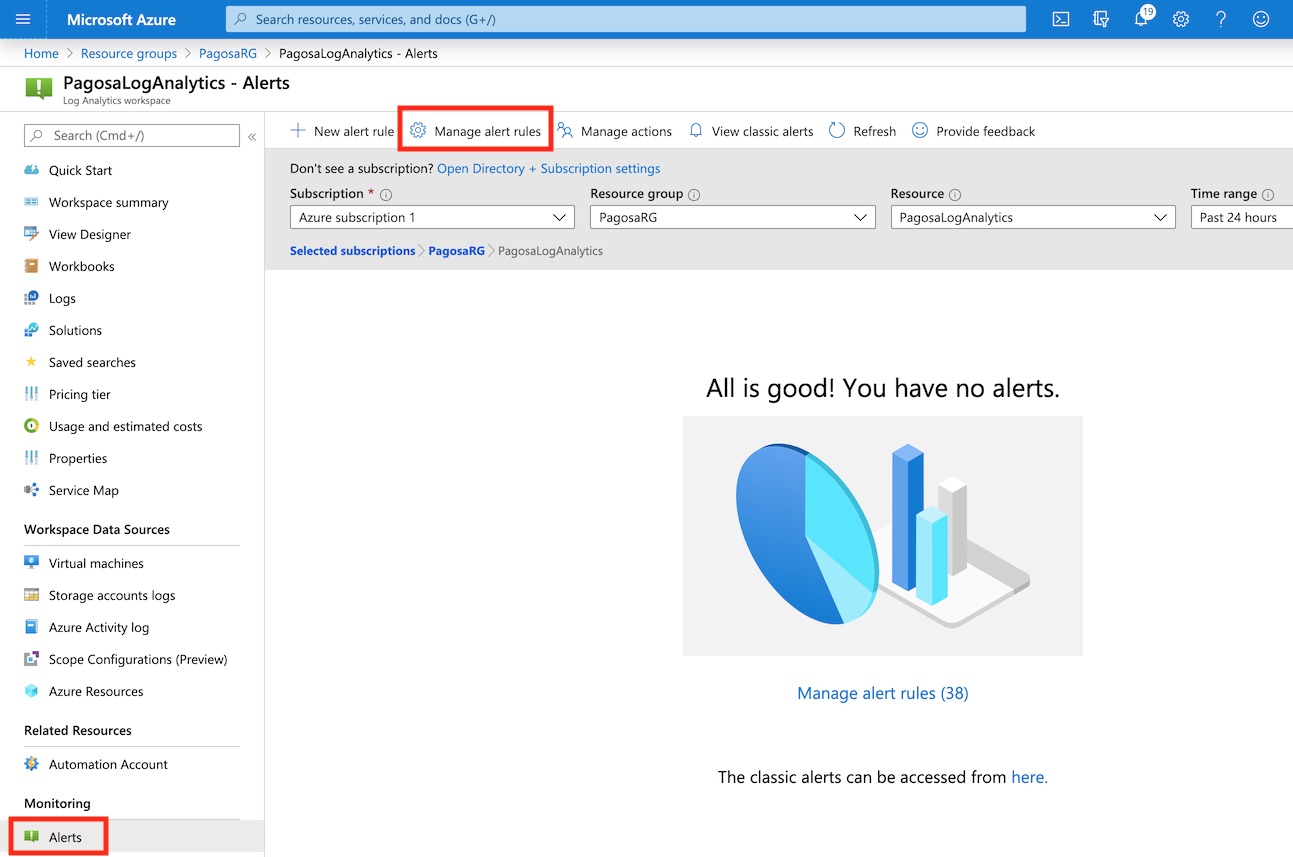

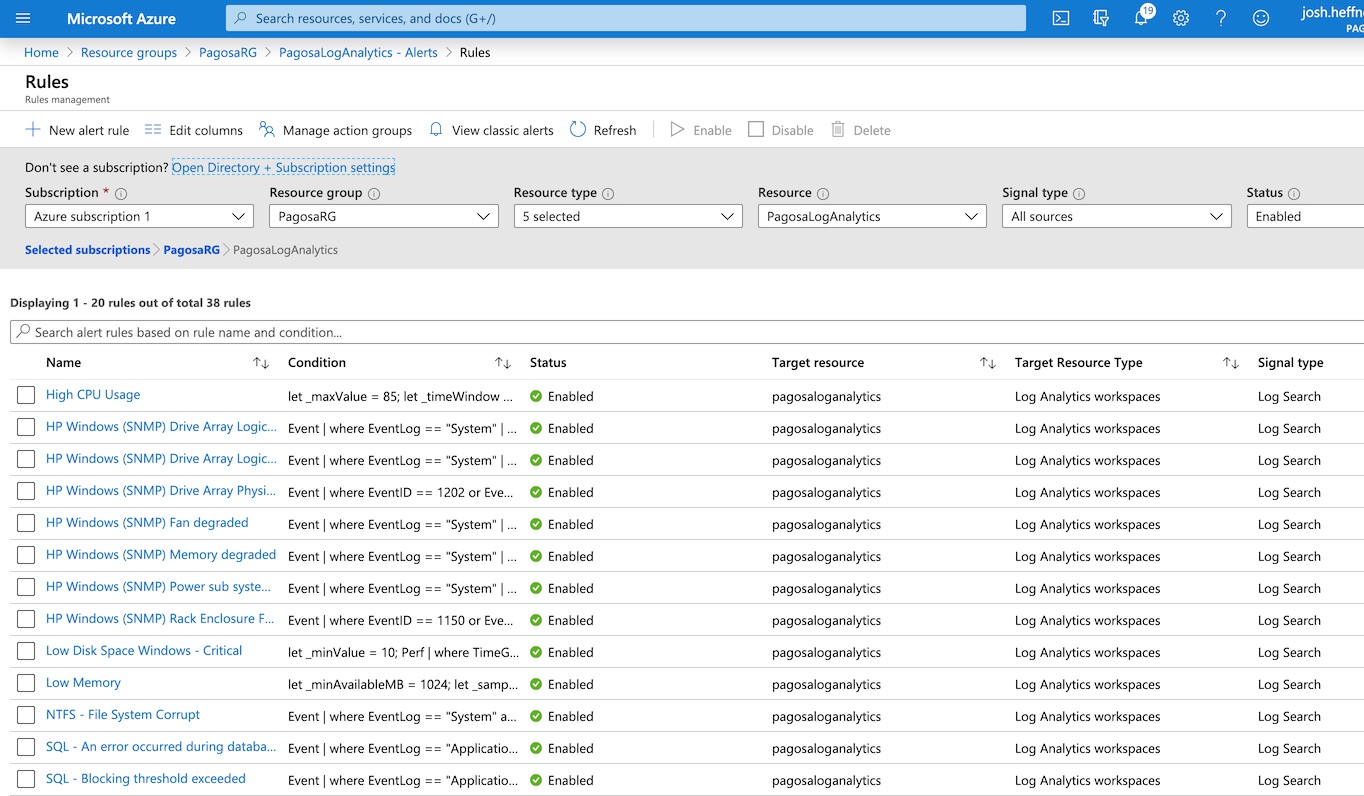

When the import has completed, open the Alerts blade of Log Analytics. Open Manage Alert Rules from the top menu.

Here you can see all the Alert Rules that were just imported. You can enable, disable and modify any rule from here.

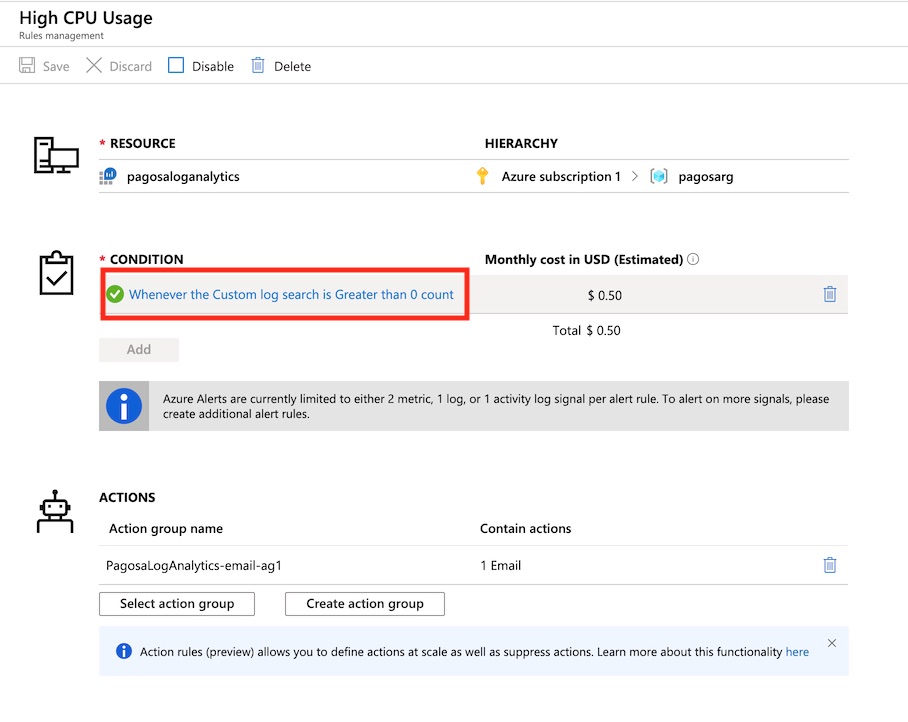

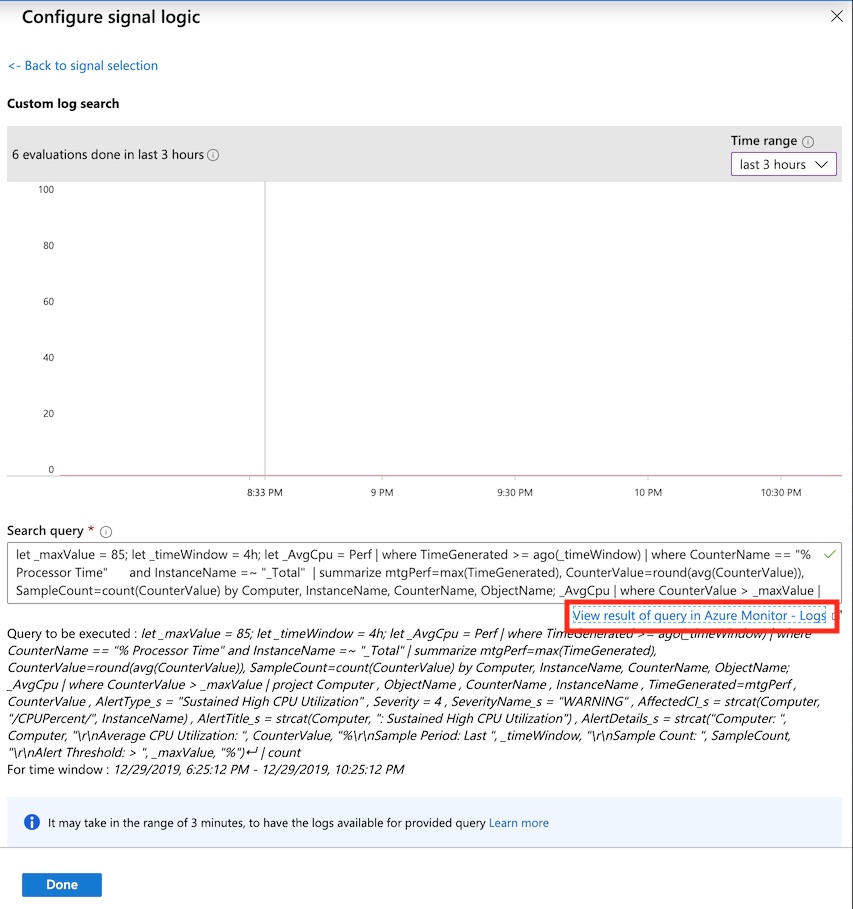

It might help to think of alert rules in two parts – a condition that gets triggered and an action to follow in response. Open the condition to view the log query used.

Here you can view the logic used for the rule. You can also view live results of the query.

You can customize each query as needed. Here are a few examples of how you could modify a query:

//Limit the query to specific computer(s)

| where Computer contains "<computername>"

//Limit the query to a group of computers

| where Computer in (ProductionServers)

//Limit by OS type

| where OSType == "Linux"

//Limit to a specific age

| where TimeGenerated >= ago(15m)
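If you end up applying the same filters to many rules, you could generate the extra clauses instead of typing them each time. The helper below is a hypothetical sketch in Python, not part of the Alert Toolkit: it appends the kinds of `where` clauses shown above to a base query string. It does no escaping of names, and it assumes the base query still exposes the `Computer`, `OSType`, and `TimeGenerated` columns at the point where the filters are appended.

```python
def scope_query(base_query, computers=None, os_type=None, max_age=None):
    """Append optional 'where' filters to a KQL query string."""
    clauses = []
    if computers:
        # Explicit list of names, e.g. Computer in ("DC01", "DC02")
        names = ", ".join(f'"{name}"' for name in computers)
        clauses.append(f"| where Computer in ({names})")
    if os_type:
        clauses.append(f'| where OSType == "{os_type}"')
    if max_age:
        # max_age is a KQL timespan literal such as "15m" or "4h"
        clauses.append(f"| where TimeGenerated >= ago({max_age})")
    return "\n".join([base_query.strip(), *clauses])


print(scope_query("Heartbeat", computers=["DC01", "DC02"], os_type="Linux", max_age="15m"))
```

Filters added after a `summarize` only work if the column survives the summarize, so for the toolkit queries it is usually safer to place the filter by hand near the top of the query.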

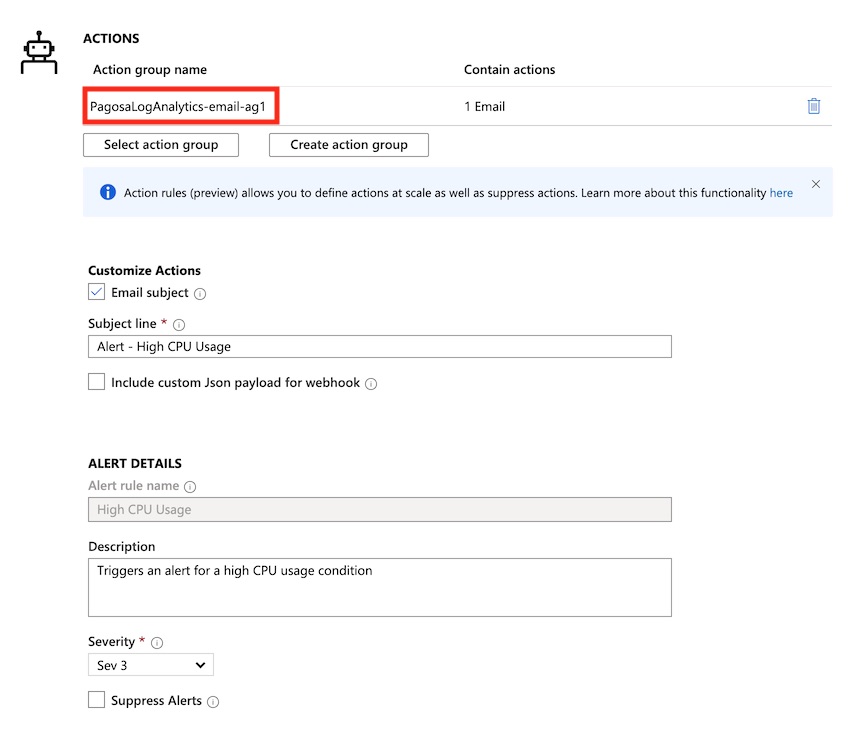

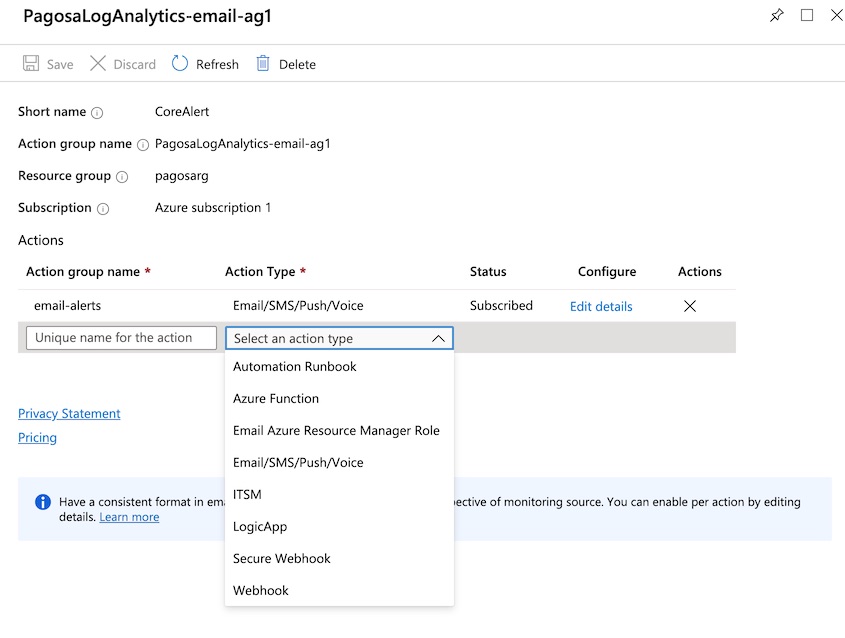

The second part of the alert rule is the action. The existing email action was created from the Alert Toolkit import but can easily be modified. You can also edit the email/detail properties here.

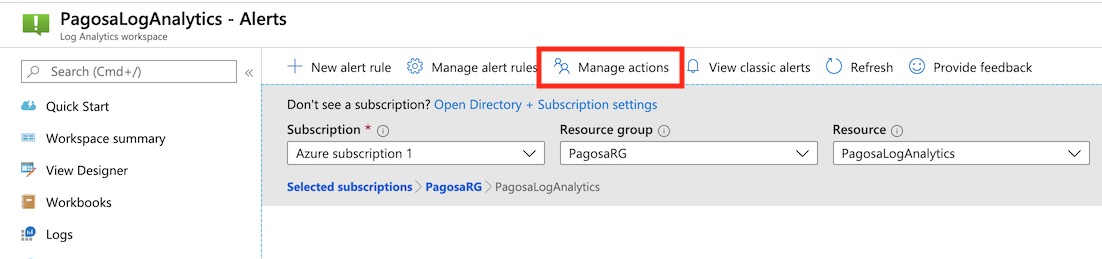

Back in the alerts blade, you can view the alert action group settings by opening Manage Actions.

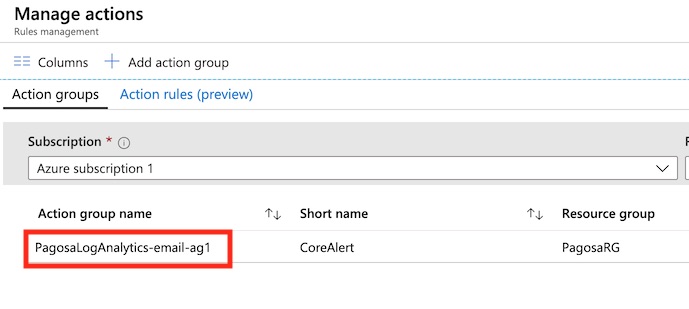

Here you can see the action group created from the import. Open it to change its settings.

This alert is already set for email notification, but there are several other options for alert actions.

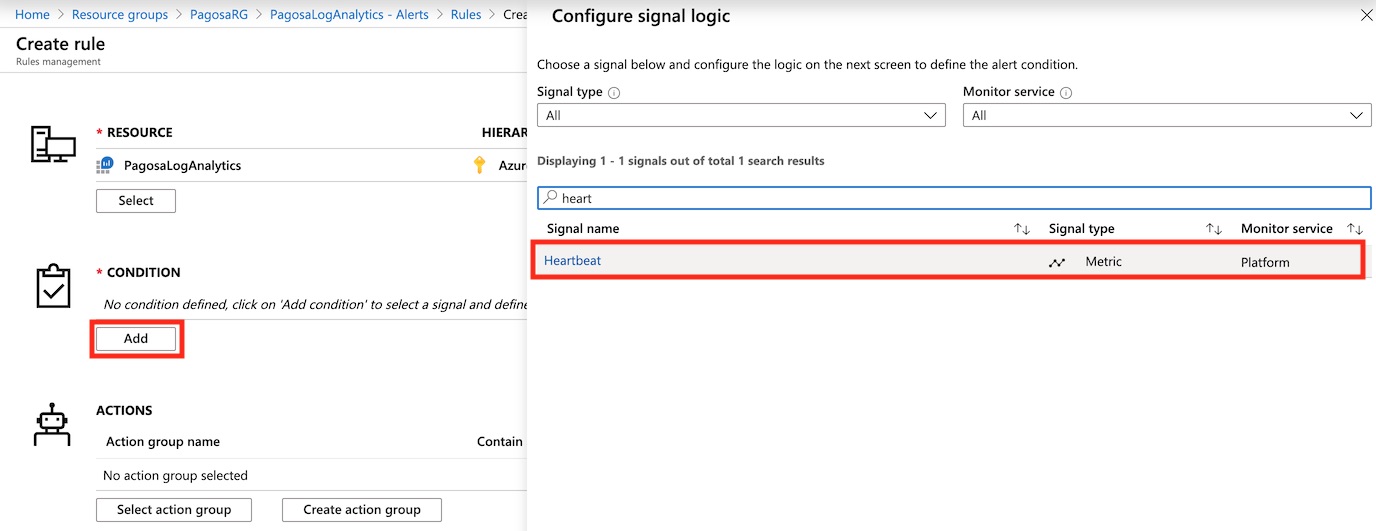

One critical alert rule that isn’t included in the toolkit is server heartbeat (up/down). Let’s create a new rule for this. Add a condition and search for the Heartbeat metric, then open it.

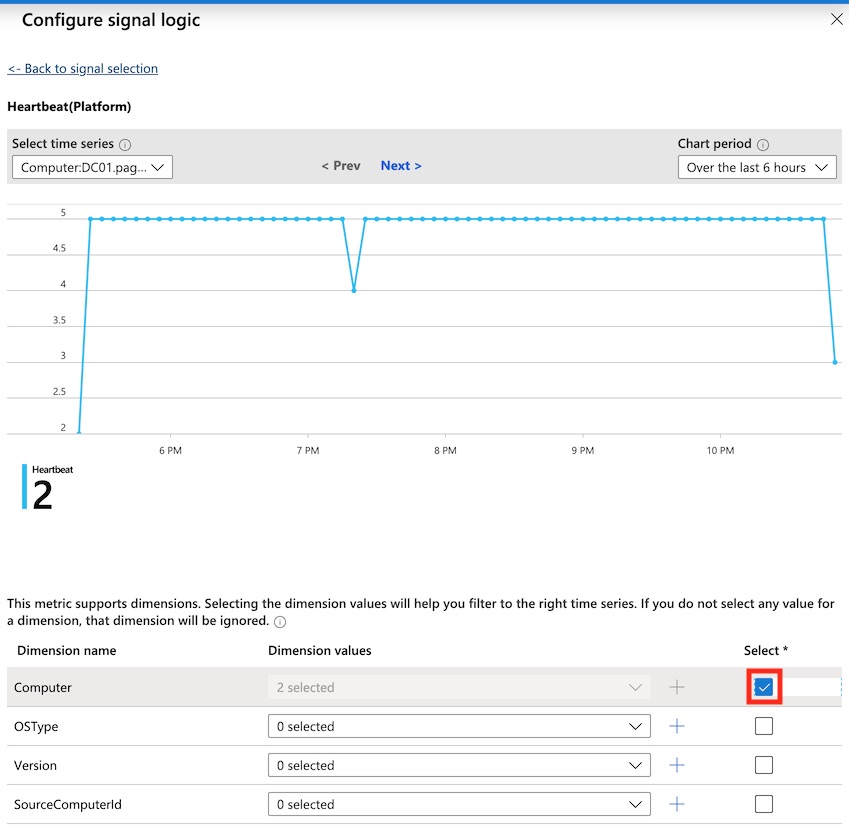

Check the box for dimension, which scopes this alert rule to all computers.

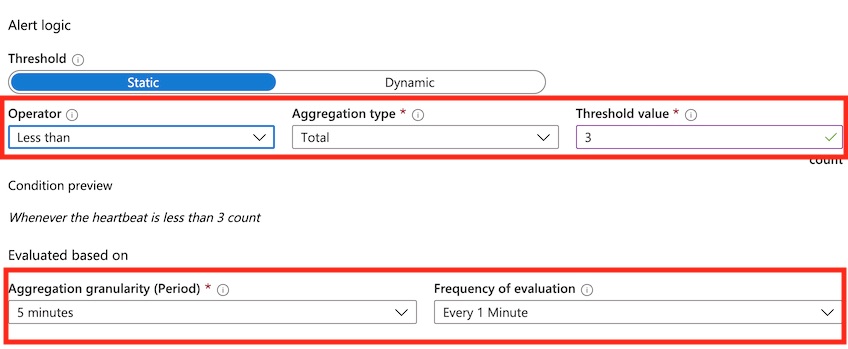

Adjust the alert logic to a threshold that won’t cause a false positive, but will also catch heartbeat drops as fast as possible. This may take some tweaking. While log/metric aggregation happens in real-time, there can be delays that could cause this alert to misfire if the threshold is too strict. I like to use the settings below to monitor server availability.

In short, this rule evaluates the last 5 minutes, every minute, and fires if a heartbeat occurred fewer than 3 of 5 times.
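To see how that threshold behaves when a server goes down, here is a small Python simulation of the rule as described: a sliding 5-minute window, re-evaluated every minute, firing when fewer than 3 of the 5 minutes contain a heartbeat. It assumes one heartbeat is expected per minute and models each minute as simply "seen" or "not seen"; the function names are made up and this is not how Azure Monitor evaluates the rule internally.

```python
def heartbeat_alert_fires(heartbeat_seen, window=5, min_heartbeats=3):
    """True if fewer than min_heartbeats of the last `window` minutes had a heartbeat."""
    recent = heartbeat_seen[-window:]
    return sum(1 for seen in recent if seen) < min_heartbeats


def evaluate_timeline(heartbeat_seen, window=5, min_heartbeats=3):
    """Re-evaluate the rule once per minute, starting when a full window is available."""
    return [
        heartbeat_alert_fires(heartbeat_seen[:end], window, min_heartbeats)
        for end in range(window, len(heartbeat_seen) + 1)
    ]


# Five healthy minutes, then the server stops reporting for four minutes.
timeline = [True] * 5 + [False] * 4
print(evaluate_timeline(timeline))
```

With these settings, one or two missed heartbeats are tolerated and the rule fires on the third consecutive missed minute. Raising the minimum makes the alert faster but more prone to false positives from ingestion delays.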

Alerting will take some trial and error based on how sensitive your rules are and exactly what you want to monitor, but it’s easy to make adjustments. I would recommend creating a pilot group of servers to monitor and focus on getting the alerts just right for them, then expanding your scope from there.

Monitor Metrics

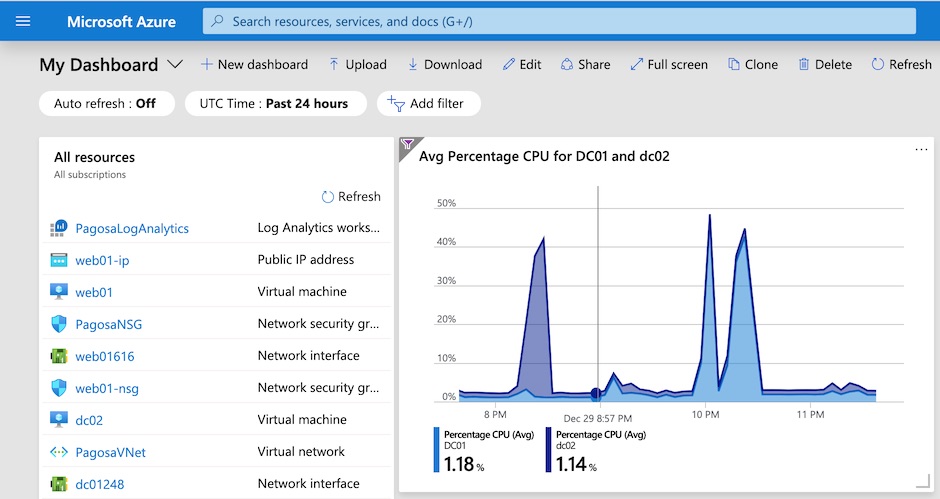

Let’s briefly look at how you can create a metrics dashboard from the ingested metrics data from our VMs. These graphs can be generated from Log Analytics queries, but they can also be created in Azure Monitor Metrics and then saved to a dashboard in the portal. This is a great way to look at live and historical performance metrics across your environment.

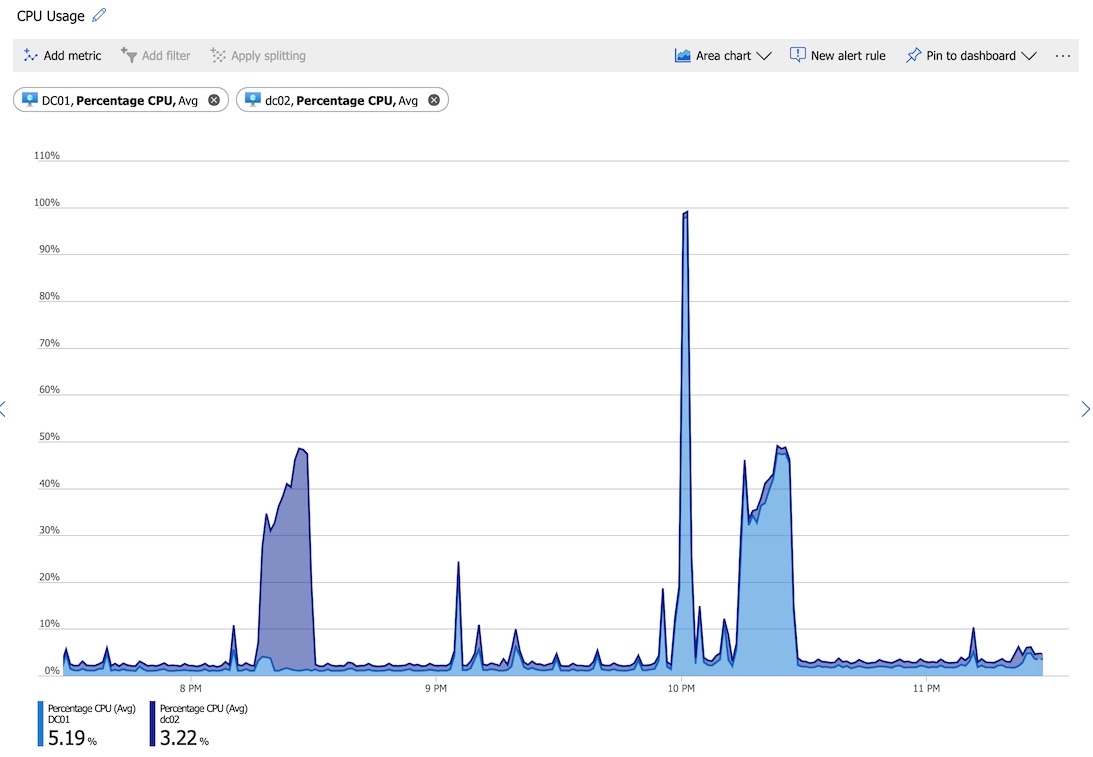

Here’s an example of a simple graph with CPU usage for two VMs in this environment:

This graph can be used to quickly identify trends and analyze resource utilization of your systems.

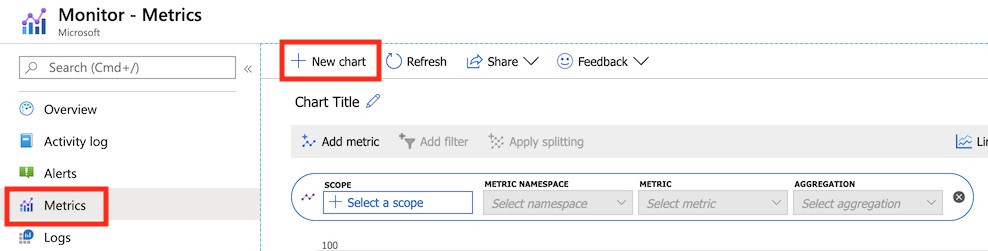

To build it, open Azure Monitor from the portal and choose New Chart.

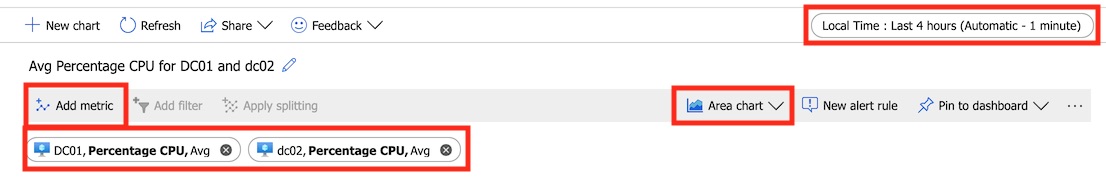

Create a scope by selecting the item you want to monitor, choose the metric, and adjust the time frame.

Once the metric graph is dialed in how you like, you can export it to a file, share a link to it, or pin it to a dashboard for easy use. The ability to generate visually rich and informative dashboards quickly using Azure Monitor makes it a very useful tool for daily operations.

Summary

This post covered a lot of information! Azure Monitor and the alerting capabilities of Log Analytics can be very useful in any environment by quickly providing relevant information. While it can perform the standard duties of any monitoring solution, it does much more as a modern tool built on DevOps. It does require some time to test, tweak, and perfect for production-level monitoring, but its modern capabilities and cost savings make it a worthy successor to SCOM and other server-based monitoring solutions.